Professional pest control is an effective way to fight insects that lets you get long-lasting relief from

Marble chips are natural marble crushed to a specified size, a material that combines

In this article, we look at how the trademark registration process works in several key

Caring for your beloved furry friend's health begins with a comprehensive examination by a veterinarian. An experienced doctor will advise

The advantage of buying Origin keys is the chance to get games at better prices than

The approach to learning German should be comprehensive, combining the study of grammar, vocabulary, speaking practice

The selection of sweatshirt designs featuring dogs is impressively varied. They can be made in various

In this article, we explain why you should take your dog to sessions with a dog trainer. What are the real benefits

Audiobooks about animals open the door to an amazing world where animals speak with human voices, experience

To improve sleep quality, it is recommended to follow a few simple rules. For example, it is important to stick to a regular schedule

Touch switches are becoming increasingly popular in modern homes thanks to their functionality and aesthetic

Dry dog food is a popular and convenient feeding option for pets. It

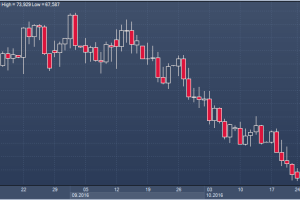

Today, the euro-to-ruble exchange rate remains a focus of investors' attention. For

Today's world of technology and the Internet lets us easily download new songs and

How do you choose an affordable dental clinic that treats teeth well and painlessly?

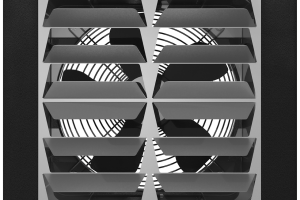

ГРЕЕРС destratifiers are especially effective in large industrial and commercial spaces, where the problem of heat loss

A Steam key is a unique code that can be used to activate games or software

In Novosibirsk, you can not only have fun with your children but also enjoy a tasty meal.

Grooming is not only the process of caring for a pet's coat, claws, and ears,

A medical certificate is an important document that confirms a person's state of health in various life situations.

A domestic cat requires special care. We cover the important aspects of looking after your pet.

The Moscow Exchange (Мосбиржа) is the largest exchange in Russia and an important financial center, playing a key

Preparing for the OGE (ОГЭ) in mathematics is not just about acquiring problem-solving skills, but

Choosing a playground is an important step that must take into account the diverse needs and interests

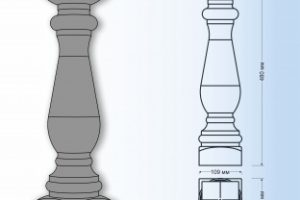

Balustrades, which play an important role in creating a striking architectural appearance, achieve an outstanding level of style and